Project: Galath3a, Berlin, Germany

Participatory installation for Human-Robot Interaction (HRI), exploring trust through spatial configuration and consent between humans and robots

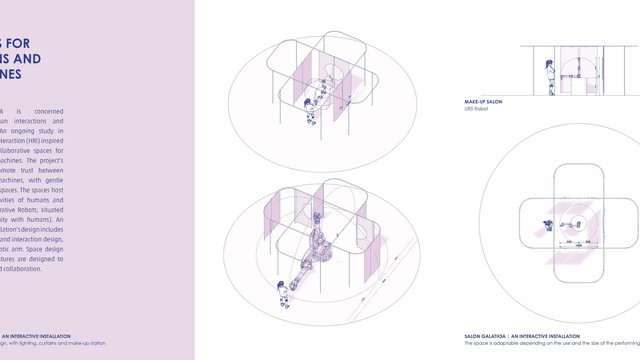

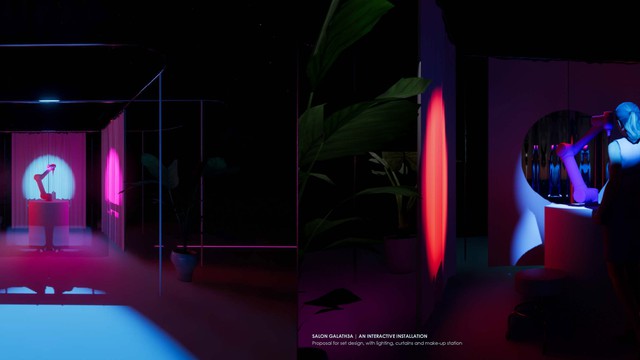

Project:GALATH3A is concerned with post-human interactions and environments. An ongoing study in Human-Robot Interaction (HRI) inspired the design of collaborative spaces for humans and machines. The project aims to promote trust between humans and machines through gentle touch and safe-spaces. These spaces host co-creation activities between humans and CoBots (collaborative robots that work in close proximity to humans). The interactive installation combines spatial design and interaction design around a UR5 robotic arm; both the space and the robotic gestures are designed to support trust and collaboration.

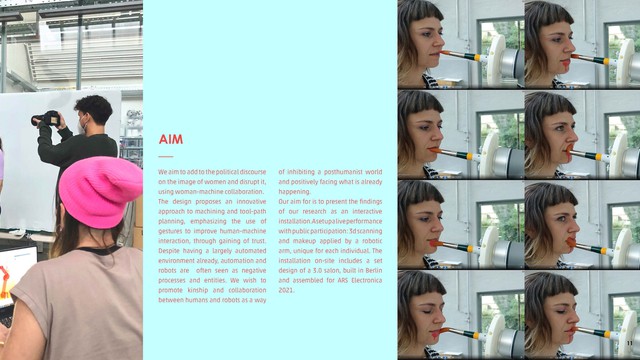

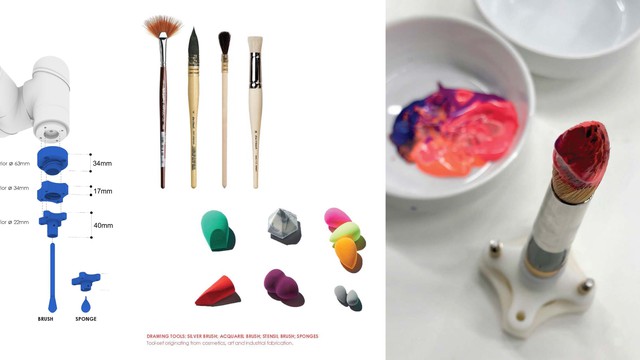

Project:GALATH3A is an ongoing study in Human-Robot Interaction (HRI), aimed at improving collaboration and increasing trust between humans and machines. Despite its proven advantages, growing technological acceleration is met with suspicion. Gains in efficiency and worker safety are countered by fears of human redundancy, machine error and a dreaded power shift from humans to robots. Addressing these fears is critical to adapting to life in a post-humanist society: living and working with robots and AI. Learning from the social power shift advocated by the #metoo movement, we find its discourse of consent valuable for HRI design. Consent and understanding are needed to build trust between the overpowered and the underpowered, whether the imbalance lies in gender or between the organic and the mechanical. As designers working with robots, we wish to promote kinship and collaboration.

This project proposes a novel approach to HRI, encompassing path-planning in robotic fabrication and the design of safe-spaces for human-robot collaboration. In a process we call Robotic Mirroring, human gestures are studied, recorded and translated into machine tool-paths. These gestures are used in close collaboration between humans and CoBots (collaborative robots that work in close proximity to humans). The project proposes the design of collaborative spaces that host HRI. These spaces echo therapeutic and meditation spaces to address human needs, while fitting the technical requirements and motion range of robotic arms. Spatial modularity, via metal frames, tracks and moving curtains, allows multiple humans and robotic arms of varied scales to co-create.

The design proposal focuses on Responsive Collaboration: real-time robotic responses to humans. It is evaluated with an interactive installation for public participation. At its focus is the act of having makeup applied by another person, which requires a great deal of trust. Makeup was also chosen for hacking because it is synonymous with the male gaze, which is here reversed, echoing the reversal of power in HRI. The digital design process includes Hi-Res facial scans; bespoke, parametrically designed makeup patterns in Rhino and Grasshopper (GH); and their application with a UR5 robotic arm fitted with bespoke 3D-printed end-effectors. The project's name refers to the myth of Galatea: the image of the perfect woman, created by the sculptor Pygmalion for his own pleasure. We wanted to reverse this relationship. Here, the instrument is regarded as an equal and is invited to partake in co-creation.

https://online.fliphtml5.com/pogqc/pgih

Details

Building or project owner : Project: Galath3a

Architecture : Project: Galath3a, Berlin

Project artist/ concept/ design/ planning : Gili Ron and Irina Bogdan

Project co-ordination : Project: Galath3a

Interaction design/ programming : Project: Galath3a

Project sponsor/ support : Berlin Open Lab, Universität der Künste (UDK), Berlin

Descriptions

Facade type and geometry (structure) : The project proposes the design of collaborative spaces that host Human-Robot Interaction (HRI). These spaces echo therapeutic and meditation spaces to address human needs, while fitting the technical requirements and motion range of robotic arms. Spatial modularity, via metal frames, tracks and moving curtains, allows multiple humans and robotic arms of varied scales to co-create.

Urban situation : Project:Galath3a speculates on a near future in which automatons and humans co-create and co-inhabit spaces. In an urban sense, this proposal relates to design trends of Industry 4.0: the return of small- and medium-scale industries to the city. This includes clean fabrication tools, such as 3D printers and robotic arms, alongside a growing trend of robots for home use. Work and work tools reinstated at home raise questions about the changing notions of “work” and “tools”, and our relationship with them. These shifts alter the urban environment, merging once-zoned land uses and blending public with private.

Description of showreel : Filmed at the Berlin Open Lab (BOL), Universität der Künste (UDK), Berlin, the showreel features live footage of a designed tool-path painting a human face. The robotic arm is mounted with a 3D-printed end-effector designed to hold a brush. Raising questions about sexuality, gender biases and human-machine kinship, the initiative focuses on “the act of applying makeup and hacks it because it is synonymous with the notion of ‘perfection’, the commoditization of the woman-body and the male gaze”. The process includes Hi-Res 3D facial scans and the digital design of bespoke painting patterns. The robotic arm is taught how to carefully approach humans and paint their faces. To build trust between people and machines, gestures are introduced into the machine path; through repetition, simulation and gentle touch, trust is gained.

Participatory architecture & urban interaction

Host organization : Project:GALATH3A is ongoing design research in woman-machine collaboration, performed at the Berlin Open Lab, Universität der Künste (UDK), Berlin.

Issues addressed : Project:GALATH3A is concerned with the emerging need to address ethics in technology by analyzing and acknowledging gender and racial biases in existing data structures. We use human-robot interaction (HRI) and data analysis to build up and speculate on future post-human discourses. The project relates to women's image in the digital age and uses robotic fabrication to speculate on what is culturally considered "womanly behavior". Our aim is to improve communication and collaboration through trust and consent, both in HRI and in human discourse. We propose an innovative approach: in a process we call Robotic Mirroring, human gestures are studied, recorded and translated into machine tool-paths. With the robot as mimic, Mirroring lets us ask questions about the nature of these behaviors: are they necessary? Should they be passed on? If so, how could we enhance them to empower women?

Impact : Our aim is to make our research public through multiple channels and to address coded biases. To avoid gender, racial and other biases in data and design, multiple tests with varied participants are necessary. We aim to reach diverse populations by taking the research out of the lab and into the public sphere. To do so, we propose the design of a portable installation and mini-lab (a "mobile art laboratory", or MALA), hosted at public events such as fairs, festivals and conferences. This also helps expose wider audiences to HRI and address human bias against machines. By exposing the public to cutting-edge technology, we want to address stereotypes concerning facial recognition, robots and AI. We intend to create an equitable approach and move away from the biased information currently available to, and used by, machine learning algorithms and AI.

Tools developed : Project:Galath3a’s technological ambition lies in its innovative use of numerical fabrication tools: promoting societal discourse and addressing future challenges of HRI in gesture communication. This use of CAD-CAM contrasts with the widespread use of robotic arms: here the arm works on a non-regular surface (skin and bone), responds to moving subjects and performs human-like, expressive motions. The novel use of 3D scans for tool-path planning enables Hi-Res execution and allows a continuously updated data flow. Our intended performance of the robotic arm on multiple users at once has, to the best of our knowledge, never been done before.
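The project carries out its scan-based tool-path planning in Rhino and Grasshopper with the Robots plugin. Purely as a hedged illustration, the Python sketch below assumes a brush stroke has already been sampled from a scanned face mesh as points with surface normals, and shows one common way to turn such a stroke into robot waypoints: approach along the local normal, follow the stroke, then retract. The names `SurfaceSample`, `offset` and `stroke_to_waypoints`, and the 30 mm clearance, are hypothetical; tool orientation, speeds and safety limits are deliberately left out.

```python
from dataclasses import dataclass
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class SurfaceSample:
    """Hypothetical sample taken from a scanned face mesh."""
    point: Vec3   # position on the scanned surface (assumed mm)
    normal: Vec3  # outward surface normal at that point (any length)


def offset(p: Vec3, n: Vec3, d: float) -> Vec3:
    """Move point p by distance d along direction n (n is normalised here)."""
    length = (n[0] ** 2 + n[1] ** 2 + n[2] ** 2) ** 0.5
    if length == 0:
        raise ValueError("zero-length normal")
    return (p[0] + n[0] * d / length,
            p[1] + n[1] * d / length,
            p[2] + n[2] * d / length)


def stroke_to_waypoints(stroke: List[SurfaceSample],
                        clearance: float = 30.0,
                        contact: float = 0.0) -> List[Vec3]:
    """Hypothetical helper: approach above the first sample, follow the
    stroke at the contact offset, then retract above the last sample."""
    if not stroke:
        return []
    first, last = stroke[0], stroke[-1]
    # Approach: hover 'clearance' away from the skin along the normal.
    path = [offset(first.point, first.normal, clearance)]
    # Stroke: follow the surface at the (assumed) brush contact offset.
    path += [offset(s.point, s.normal, contact) for s in stroke]
    # Retract: lift away along the last normal before the next stroke.
    path.append(offset(last.point, last.normal, clearance))
    return path
```

Offsetting along the local normal, rather than lifting straight up, keeps approach and retreat perpendicular to the curved skin surface. In this simplified model, a continuously updated data flow would simply mean regenerating the waypoints whenever a new scan arrives.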

Tools used : Project:Galath3a draws on a range of cutting-edge technologies: robotic fabrication with a UR5 robotic arm; path-planning in Rhinoceros 3D, Grasshopper 3D and the Robots plugin for GH; photogrammetric 3D scans and mesh analysis with 3D Zephyr; digital makeup patterns in Rhino-GH; 3D-printed robot end-effectors for makeup tools (FDM, SLS); and graphic and video editing in the Adobe and Affinity creative suites. The work is simulated and tested on our own faces.

Next steps : To test our design proposal in HRI, public-participatory interactive installations ("mobile art laboratories") are proposed to observe human reactions live. Currently, robotic fabrication is programmed to fit the features of the researchers. To increase our scope and execute bespoke designs and fabrications for every new participant, we are looking to improve data transfer from the tangible to the digital. We wish to include real-time scanning, with a 3D scanner mounted on the robotic arm. A live data stream would speed up the process while enhancing the participatory nature of the experience. Later, we wish to use live scanning to further inform robotic gestures. We then aim for two-way communication in movement, responding to inputs such as facial expressions and bio-data. This will culminate in a language of gestures that responds to participants' signals.

Media credits

Project: Galath3a
